At its 2025 conference in Las Vegas, AWS announced that future versions of its custom AI chips will integrate Nvidia’s NVLink Fusion interconnect, aiming to build larger, faster clusters for training massive AI models.

This makes AWS one of the first cloud providers to adopt Nvidia’s NVLink technology, joining earlier sign-ups such as Intel and Qualcomm.

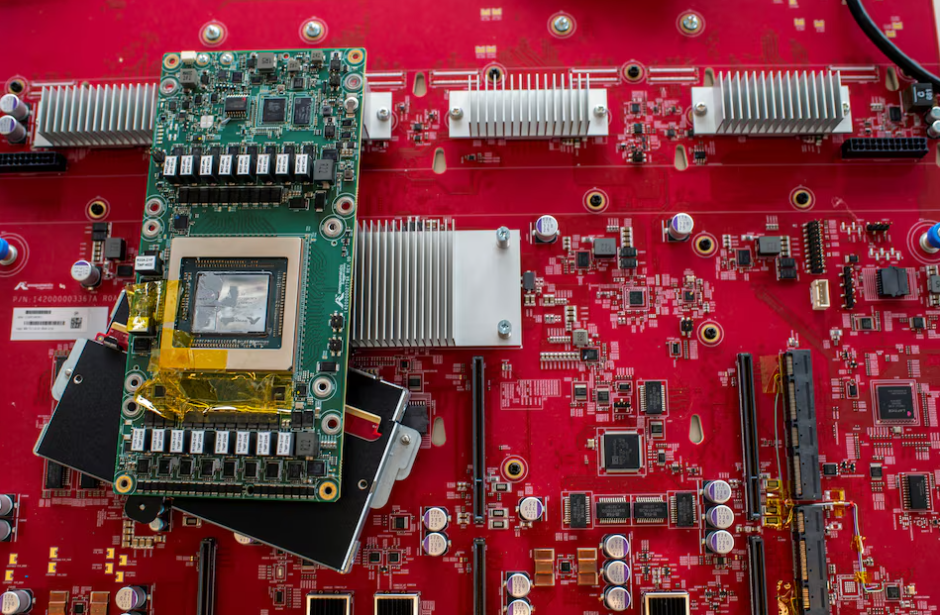

Meanwhile, AWS rolled out new servers powered by its existing AI chip, Trainium3. Each server packs 144 chips and reportedly delivers over four times the computing power of AWS’s previous-generation infrastructure, while using roughly 40% less power.

With this dual move, combining in-house silicon with Nvidia interconnect technology, AWS is doubling down on its ambition to offer competitive, scalable AI infrastructure.

If the improved chip interconnect and power efficiency deliver as promised, the shift could reshape the AI-cloud landscape, reducing reliance on expensive GPU-only servers and opening AI infrastructure to a broader customer base.
